Content from Why use an HPC System?

Last updated on 2025-03-05

Overview

Questions

- What is an HPC system?

- How does an HPC system work?

- Why would I be interested in High Performance Computing (HPC)?

- What can I expect to learn from this course?

Objectives

- Be able to describe what an HPC system is

- Identify how an HPC system could benefit you.

What Is an HPC System?

The words “cloud”, “cluster”, and the phrase “high-performance computing” or “HPC” are used a lot in different contexts and with various related meanings. So what do they mean? And more importantly, how do we use them in our work?

Jargon Busting

Your Personal Computer

We are probably all familiar with a laptop or a desktop computer (some call it a PC, for Personal Computer). These computers are aimed at individual users, including people coding or analysing data. Typically each one of us in the classroom has our own laptop or a university computer in front of us, and we can work independently of one another. A personal computer is good for performing local and personal tasks, but it has limited resources.

Shared Computing Resources

What happens when we want to share resources, like printers or files? In the late 1950s the US military built a network of computers that used modems and normal telephone lines to connect to one another. Things have progressed quite a bit since then. Today our computers can talk to one another in several ways. At most universities you might notice that the desktop computers have a wire (called an Ethernet cable) that connects them to the university’s network. Laptops and other devices such as phones and tablets can also connect to networks using WiFi, which is a wireless technology using radio waves. However, what happens if we want a computer to perform a task which requires much more hardware than our desktop or laptop can provide?

A Larger Computer

One alternative is to build a computer with very powerful components. The main components of a computer are its input devices, processor, memory, and output and storage devices such as a hard drive. Each of these components can be upgraded to a more powerful version. This is somewhat analogous to buying a car. You might decide to buy a car that can take more people, so instead of a two-seater sports car you would look at a bigger car that can take four, six or eight people. However, a car that can carry eight people will also need a bigger engine, because the engine of the smaller car won’t be strong enough to move eight people. Computers can also be specced to accomplish certain tasks: some tasks need very fast processing power but not much memory, other jobs need lots of memory, and others need really big hard drives to store data.

A big disadvantage of a computer with bigger and better hardware is that its application becomes limited: using it for other tasks might be very expensive, or it might simply not be suitable. Using the car analogy: you wouldn’t use a bus to take your two children to school on a regular basis. Quite often a very expensive computer is purchased for a specific project, and after the project is completed the computer becomes an extremely expensive ornament standing in a corner gathering dust. What is the alternative?

Cloud Systems

How about buying a car suitable to take your children to school on a regular basis and then renting a bus for the odd occasion that you have to take 30 kids to the museum? Cloud computing fulfils a similar role when the need arises to use a bigger or faster computer. But first, what exactly is cloud computing? It is nothing other than computer networks, as described in the section about Shared Computing Resources, that belong to external organisations and are connected to the Internet so that, in turn, we can also connect to them. For the privilege of being able to access those computers we pay a fee, much like renting a car. We can specify exactly what we need in terms of the amount of memory (RAM), the speed of the processors and so forth. So this might be a good solution but, as always, there are loads of ifs and buts. What if we, as an organisation, make so much use of these computers that we want our very own? Cloud computers also have certain limitations, so we can’t always get the specifications we want. Even if we can’t afford our own large computer, we might therefore want an alternative to cloud computing.

The Cloud is a generic term commonly used to refer to computing resources that are a) provisioned to users on demand or as needed and b) represent real or virtual resources that may be located anywhere on Earth. For example, a large company with computing resources in Brazil, Zimbabwe and Japan may manage those resources as its own internal cloud and that same company may also utilize commercial cloud resources provided by Amazon or Google. Cloud resources may refer to machines performing relatively simple tasks such as serving websites, providing shared storage, providing web services (such as e-mail or social media platforms), as well as more traditional compute intensive tasks such as running a simulation.

A Cluster or Supercomputer

The term HPC system, on the other hand, describes a stand-alone resource for computationally intensive workloads. Such systems typically comprise a multitude of integrated processing and storage elements, designed to handle high volumes of data and/or large numbers of floating-point operations (FLOPS) with the highest possible performance. For example, all of the machines on the Top-500 list are HPC systems. To support these constraints, an HPC resource must exist in a specific, fixed location: networking cables can only stretch so far, and electrical and optical signals can travel only so fast.

The word “cluster” is often used for small to moderate scale HPC resources less impressive than the Top-500. Clusters are often maintained in computing centers that support several such systems, all sharing common networking and storage to support common compute intensive tasks.

Let’s dissect what resources programs running on a laptop require:

- the keyboard and/or touchpad is used to tell the computer what to do (Input)

- the internal computing resources (Central Processing Unit and Memory) perform calculations

- the display depicts progress and results (Output)

Schematically, this can be reduced to the following:

When Tasks Take Too Long

When the task to solve becomes heavy on computations, the operations are typically out-sourced from the local laptop or desktop to elsewhere. Take for example the task of finding the directions for your next vacation. The capabilities of your laptop are typically not enough to calculate that route on the spot: finding the shortest path through a network takes on the order of O(v log v) time, where v (vertices) represents the number of intersections in your map. Instead of doing this yourself, you use a website, which in turn runs on a server that is almost definitely not in the same room as you are.

Note here that a server is mostly a noisy computer mounted into a rack cabinet, which in turn resides in a data center. The Internet made it possible for these data centers to be far away from your laptop. What people call The Cloud is mostly a web service where you can rent such servers by providing your credit card details and requesting remote resources that satisfy your requirements. This is often handled through an online, browser-based interface listing the various machines available and their capacities in terms of processing power, memory, and storage.

The server itself has no direct display or input methods attached to it. But most importantly, it has much more storage, memory and compute capacity than your laptop will ever have. In any case, you need a local device (laptop, workstation, mobile phone or tablet) to interact with this remote machine, which people typically call ‘a server’.

When One Server Is Not Enough

If the computational task or analysis to complete is daunting for a single server, larger agglomerations of servers are used. These go by the name of “clusters” or “supercomputers”.

The methodology of providing the input data, configuring the program options, and retrieving the results is quite different from using a plain laptop. Moreover, the graphical interface is often discarded in favor of the command line. This imposes a double paradigm shift for prospective users, who are asked to

- work with the command line interface (CLI), rather than a graphical user interface (GUI)

- work with a distributed set of computers (called nodes) rather than the machine attached to their keyboard & mouse

I’ve Never Used a Server, Have I?

Take a minute and think about which of your daily interactions with a computer may require a remote server or even cluster to provide you with results.

- Checking email: your computer (possibly in your pocket) contacts a remote machine, authenticates, and downloads a list of new messages; it also uploads changes to message status, such as whether you read, marked as junk, or deleted the message. Since yours is not the only account, the mail server is probably one of many in a data center.

- Searching for a phrase online involves comparing your search term against a massive database of all known sites, looking for matches. This “query” operation can be straightforward, but building that database is a monumental task! Servers are involved at every step.

- Searching for directions on a mapping website involves connecting your (A) starting and (B) end points by traversing a graph in search of the “shortest” path by distance, time, expense, or another metric. Converting a map into the right form is relatively simple, but calculating all the possible routes between A and B is expensive.

Checking email could be serial: your machine connects to one server and exchanges data. Searching by querying the database for your search term (or endpoints) could also be serial, in that one machine receives your query and returns the result. However, assembling and storing the full database is far beyond the capability of any one machine. Therefore, these functions are served in parallel by a large, “hyperscale” collection of servers working together.

HPC research examples

Frequently, research problems that use computing can outgrow the capabilities of the desktop or laptop computer where they started:

- A statistics student wants to cross-validate a model. This involves running the model 1000 times — but each run takes an hour. Running the model on a laptop will take over a month! In this research problem, final results are calculated after all 1000 models have run, but typically only one model is run at a time (in serial) on the laptop. Since each of the 1000 runs is independent of all others, and given enough computers, it’s theoretically possible to run them all at once (in parallel).

- A genomics researcher has been using small datasets of sequence data, but soon will be receiving a new type of sequencing data that is 10 times as large. It’s already challenging to open the datasets on a computer — analyzing these larger datasets will probably crash it. In this research problem, the calculations required might be impossible to parallelize, but a computer with more memory would be required to analyze the much larger future data set.

- An engineer is using a fluid dynamics package that has an option to run in parallel. So far, this option has not been used on a desktop. In going from 2D to 3D simulations, the simulation time has more than tripled. It might be useful to take advantage of that option or feature. In this research problem, the calculations in each region of the simulation are largely independent of calculations in other regions of the simulation. It’s possible to run each region’s calculations simultaneously (in parallel), communicate selected results to adjacent regions as needed, and repeat the calculations to converge on a final set of results. In moving from a 2D to a 3D model, both the amount of data and the amount of calculation increase greatly, and it’s theoretically possible to distribute the calculations across multiple computers communicating over a shared network.

In all these cases, access to more (and larger) computers is needed. Those computers should be usable at the same time, solving many researchers’ problems in parallel.
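The arithmetic behind the cross-validation example can be checked with a few lines of shell. This is only a sketch: the run count and run time come from the example above, while the figure of 100 machines is a made-up assumption chosen for illustration, and the calculation ignores queueing and start-up overheads.

```bash
# Figures from the statistics example above.
runs=1000          # number of independent model runs
hours_per_run=1    # each run takes one hour

# Serial: one run after another on a single laptop.
serial_hours=$(( runs * hours_per_run ))
serial_days=$(( serial_hours / 24 ))     # integer division, rounds down
echo "Serial: ${serial_hours} hours (more than ${serial_days} days)"

# Parallel: hypothetical pool of 100 machines, each doing one run at a time.
machines=100
rounds=$(( (runs + machines - 1) / machines ))   # ceiling of runs / machines
parallel_hours=$(( rounds * hours_per_run ))
echo "With ${machines} machines: about ${parallel_hours} hours"
```

The ceiling division matters when the number of runs is not an exact multiple of the number of machines: the last, partly filled round still takes a full run time.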

Break the Ice

Talk to your neighbour, office mate or rubber duck about your research.

- How does computing help you do your research?

- How could more computing help you do more or better research?

Summary

- High Performance Computing (HPC) typically involves connecting to very large computing systems elsewhere in the world.

- These other systems can be used to do work that would either be impossible or much slower on smaller systems.

Content from Working on an HPC system

Last updated on 2025-03-08

Overview

Questions

- How do I log on to an HPC system?

Objectives

- Connect to an HPC system.

- Understand the general HPC system architecture.

Logging In

The first step in using a cluster is to establish a connection from our laptop to the cluster. When we are sitting at a computer (or standing, or holding it in our hands or on our wrists), we have come to expect a visual display with icons, widgets, and perhaps some windows or applications: a graphical user interface, or GUI. Since computer clusters are remote resources that we often connect to over slow or laggy interfaces (WiFi and VPNs especially), it is more practical to use a command-line interface, or CLI, in which commands and results are transmitted as text only. Anything other than text (images, for example) must be written to disk and opened with a separate program.

If you have ever opened the Windows Command Prompt or macOS Terminal, you have seen a CLI. If you have already taken The Carpentries’ courses on the UNIX Shell or Version Control, you have used the CLI on your local machine somewhat extensively. The only leap to be made here is to open a CLI on a remote machine, while taking some precautions so that other folks on the network can’t see (or change) the commands you’re running or the results the remote machine sends back. We will use the Secure SHell protocol (or SSH) to open an encrypted network connection between two machines, allowing you to send & receive text and data without having to worry about prying eyes.

Make sure you have an SSH client installed on your laptop. Refer to the setup section for more details. SSH clients are usually command-line tools, where you provide the remote machine address as the only required argument. If your username on the remote system differs from what you use locally, you must provide that as well. If your SSH client has a graphical front-end, such as PuTTY or MobaXterm, you will set these arguments before clicking “connect.” From the terminal, you’ll write something like ssh userName@hostname, where the “@” symbol is used to separate the two parts of a single argument.

Go ahead and open your terminal or graphical SSH client, then log in to the cluster using your username.

Remember to replace userid with your username or the one supplied by the instructors. You may be asked for your password. Watch out: the characters you type after the password prompt are not displayed on the screen. Normal output will resume once you press Enter.
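As a rough sketch of how the single userName@hostname argument is put together, the snippet below builds the command and prints it rather than connecting. The host name cluster.example.org is a placeholder invented for this sketch, not the address of any real cluster; use the address given by your instructors.

```bash
# Hypothetical values: replace both with the details from your instructors.
remote_user="userid"
remote_host="cluster.example.org"   # placeholder, not a real cluster address

# ssh expects the username and host joined by "@" as one argument.
target="${remote_user}@${remote_host}"
echo "ssh ${target}"

# To actually connect, you would run:
#   ssh "${target}"
```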

Where Are We?

Users are often tempted to think of a high-performance computing installation as one giant, magical machine. Sometimes, people will assume that the computer they’ve logged onto is the entire computing cluster. So what’s really happening? What computer have we logged on to? The name of the computer we are currently logged onto can be checked with the hostname command. (You may also notice that the current hostname is part of our prompt!)
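A minimal sketch of that check follows. The fallback to uname -n is an addition for machines where the hostname utility is not installed; on the cluster, plain hostname should be all you need.

```bash
# Print the name of the machine this shell is running on.
# uname -n is only a fallback in case the hostname utility is missing.
current_host=$(hostname 2>/dev/null || uname -n)
echo "You are logged on to: ${current_host}"
```

Running the same lines on your laptop and on the cluster should give two different names, which is a quick way to confirm where a terminal window is really connected.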

What’s in Your Home Directory?

The system administrators may have configured your home directory with some helpful files, folders, and links (shortcuts) to space reserved for you on other filesystems. Take a look around and see what you can find. Hint: The shell commands pwd and ls may come in handy. Home directory contents vary from user to user. Please discuss any differences you spot with your neighbors.

The deepest layer should differ: userid is uniquely yours. Are there differences in the path at higher levels?

If both of you have empty directories, they will look identical. If you or your neighbor has used the system before, there may be differences. What are you working on?

Use pwd to print the working directory path.

You can run ls to list the directory contents, though it’s possible nothing will show up (if no files have been provided). To be sure, use the -a flag to show hidden files, too.

At a minimum, this will show the current directory as . and the parent directory as .. in the listing.
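The commands described above can be tried safely in a scratch location. This sketch uses a fresh temporary directory as a stand-in for an empty home directory, so the listing is predictable; in your real home directory the output will differ.

```bash
# Work in a fresh, empty temporary directory (a stand-in for an empty home directory).
demo_dir=$(mktemp -d)
cd "$demo_dir"

pwd      # print the working directory path
ls       # an empty directory prints nothing
ls -a    # -a also lists hidden entries, including . and ..
```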

Nodes

Individual computers that compose a cluster are typically called nodes (although you will also hear people call them servers, computers and machines). On a cluster, there are different types of nodes for different types of tasks. The node where you are right now is called the head node, login node, landing pad, or submit node. A login node serves as an access point to the cluster.

Avoid running jobs on the login node!

As a gateway, it is well suited for uploading and downloading files, setting up software, and running quick tests. Generally speaking, the login node should not be used for time-consuming or resource-intensive tasks. You should be alert to this, and check with your site’s operators or documentation for details of what is and isn’t allowed. In these lessons, we will avoid running jobs on the login node.

The real work on a cluster gets done by the worker (or compute) nodes. Worker nodes come in many shapes and sizes, but generally are dedicated to long or hard tasks that require a lot of computational resources.

All interaction with the worker nodes is handled by a specialized piece of software called a scheduler (the scheduler used in this lesson is called Slurm). We’ll learn more about how to use the scheduler to submit jobs next, but for now, it can also tell us more information about the worker nodes.

For example, we can view all of the worker nodes by running the command sinfo.

PARTITION AVAIL TIMELIMIT NODES STATE NODELIST

dell-gpu up 21-00:00:0 1 idle gpu01

defq* up 2-00:00:00 6 drng sb[019,025,029,062,069,073]

defq* up 2-00:00:00 1 drain sb060

defq* up 2-00:00:00 45 mix sb[001,003-004,006-007,009-012,014-015,017,023,027-028,032-036,038-039,041-045,047,050,053-054,058-059,061,066-068,072,078,084,086,092,104,108,110]

defq* up 2-00:00:00 58 alloc sb[002,005,008,013,016,018,020-022,024,026,030-031,037,040,046,048-049,051-052,055-057,063-065,070-071,074-077,079-083,085,087-091,093-103,105-107,109]

short up 10:00 6 drng sb[019,025,029,062,069,073]

There are also specialized machines used for managing disk storage, user authentication, and other infrastructure-related tasks. Although we do not typically log on to or interact with these machines directly, they enable a number of key features, such as ensuring our user account and files are available throughout the HPC system.

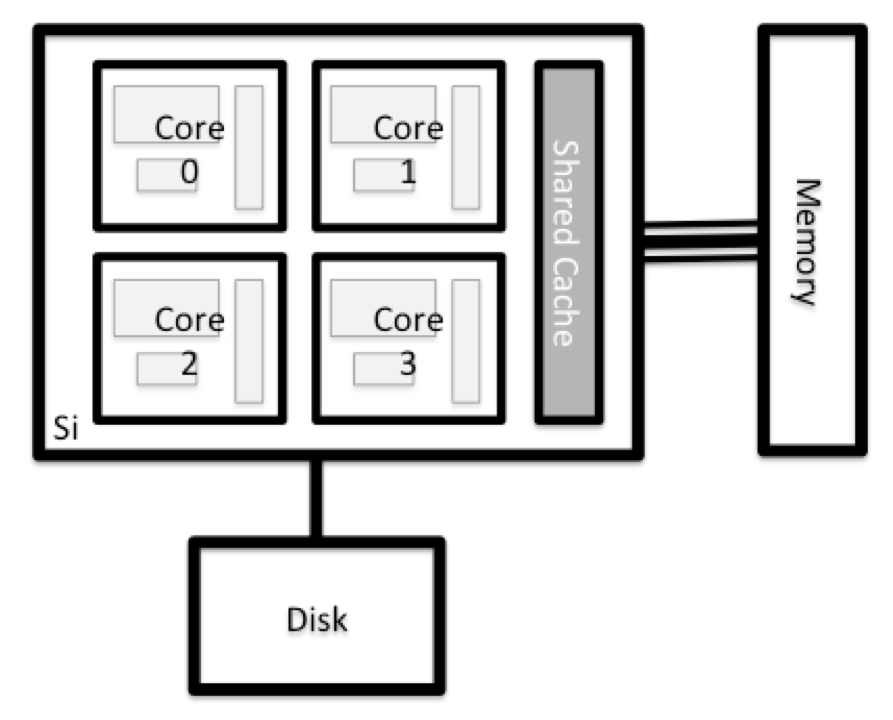

What's in a Node?

All of the nodes in an HPC system have the same components as your own laptop or desktop: CPUs (sometimes also called processors or cores), memory (or RAM), and disk space. CPUs are a computer’s tool for actually running programs and calculations. Information about a current task is stored in the computer’s memory. Disk refers to all storage that can be accessed like a file system. This is generally storage that can hold data permanently, i.e. data is still there even if the computer has been restarted. While this storage can be local (a hard drive installed inside of it), it is more common for nodes to connect to a shared, remote fileserver or cluster of servers.

There are several ways to find out how many CPUs and how much memory a computer has. Most operating systems have a graphical system monitor, like the Windows Task Manager. More detailed information can sometimes be found on the command line. For example, some of the commands used on a Linux system are:

Run system utilities

Read from /proc

Run system monitor
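The three approaches named above might look like the following on a Linux machine. This is a sketch rather than the lesson's own commands: the choice of nproc, /proc/meminfo and top is an assumption, and the getconf fallback is there only for systems without GNU coreutils.

```bash
# Run system utilities: count the CPUs available to this shell.
# nproc is part of GNU coreutils; getconf is a fallback where it is missing.
if command -v nproc >/dev/null 2>&1; then
    cpu_count=$(nproc)
else
    cpu_count=$(getconf _NPROCESSORS_ONLN)
fi
echo "CPUs: ${cpu_count}"

# Read from /proc: total memory as reported by the Linux kernel (Linux only).
if [ -r /proc/meminfo ]; then
    grep MemTotal /proc/meminfo
fi

# Run system monitor: top is interactive, so it is left commented out here.
# top
```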

Explore the login node

Now compare the resources of your computer with those of the login node.

Compare Your Computer, the login node and the compute node

Compare your laptop’s number of processors and memory with the numbers you see on the cluster login node and worker node. Discuss the differences with your neighbor.

What implications do you think the differences might have on running your research work on the different systems and nodes?

Differences Between Nodes

Many HPC clusters have a variety of nodes optimized for particular workloads. Some nodes may have larger amounts of memory, or specialized resources such as Graphics Processing Units (GPUs).

Filesystems

Home Directory

All users have a home directory on Rocket; you arrive here whenever you log in. Although you may choose to set permissions allowing others to view files in your home directory, files stored here cannot be made available to your project leader or collaborators after you leave, so DO NOT store project work here.

Project Directory

All users on Rocket are members of one or more registered projects, and each project has a directory on /nobackup for shared files. For the duration of this course you have been made a member of the training project, whose directory is /nobackup/proj/training.

Work Directory

Create a directory under our training project directory to store your work on Rocket. CHECK: Can your collaborators read files you create?

BASH

mkdir /nobackup/proj/training/userid

cd /nobackup/proj/training/userid/

touch testfile.txt

ls -la

OUTPUT

[userid@login01 userid]$ ls -la

total 8

drwx--S--- 2 userid rockhpc_training 4096 Mar 8 20:10 .

drwxrws--- 3 root rockhpc_training 4096 Mar 8 07:42 ..

-rw------- 1 userid rockhpc_training 0 Mar 8 20:10 testfile.txt

Linux file permissions are covered in Unix Shell extras.

NOTE: By default a file you create on Rocket will allow ONLY you (the owner) to read and write to it: -rw-------.

It’s a good idea to change permissions on new files so that your PI and collaborators can see your work, using chmod (or chmod -R to recurse through directories). Mode 750 grants read and execute permissions to everyone in your group, while 755 grants them to everyone.
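The two modes can be tried out on a throwaway directory before applying them to real work. In this sketch the directory comes from mktemp and merely stands in for your own work directory under the project space; the file name is taken from the earlier example.

```bash
# Stand-in for your work directory (a temporary directory created just for this demo).
work_dir=$(mktemp -d)
touch "$work_dir/testfile.txt"

# 750: owner rwx, group r-x, others no access.
chmod 750 "$work_dir"
ls -ld "$work_dir"

# 755: owner rwx, group r-x, others r-x.
chmod 755 "$work_dir"
ls -ld "$work_dir"

# -R applies the mode to the directory and everything inside it.
chmod -R 750 "$work_dir"
ls -l "$work_dir"
```

Note that a numeric mode applied with -R also sets the execute bit on ordinary files, which is harmless for a demo but not always what you want for data files.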

Local Scratch Space

Home and project directories are accessible across the Rocket cluster. There is also local space on each node, which can be used for temporary files and for more efficient I/O during jobs. This space is only accessible to that node and is always called /scratch. When you run jobs on the compute nodes, a subdirectory will be created on /scratch for each job and can be referred to using the environment variable $TMPDIR. Use this rather than the top level /scratch: it helps avoid conflicts between jobs and allows the automatic removal of files when jobs finish. Use of /scratch can make a significant difference to your computations’ speed, for example if you:

- Write temporary files that are used only while a computation is running

- Read from or write to a file numerous times during a computation

- Access numerous small files

You can view the local storage on the login node you are working on with ls /scratch
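A job script might use the per-job scratch space along the following lines. This is a sketch under assumptions: inside a job on the compute nodes $TMPDIR is set by the scheduler as described above, but the fallback to /tmp, the demo.XXXXXX name and the numbers.txt file are invented here so the snippet also runs on an ordinary machine.

```bash
# Inside a job, $TMPDIR points at the per-job scratch directory.
# Elsewhere it may be unset, so fall back to /tmp (assumption for this sketch).
scratch_base="${TMPDIR:-/tmp}"
job_tmp=$(mktemp -d "${scratch_base%/}/demo.XXXXXX")

# Write a temporary file, then read it back, all on local storage.
seq 1 1000 > "$job_tmp/numbers.txt"
line_count=$(wc -l < "$job_tmp/numbers.txt" | tr -d ' ')
echo "Wrote ${line_count} lines to ${job_tmp}"

# Anything worth keeping should be copied to the project directory at this point.

# Tidy up the temporary files when the work is done.
rm -rf "$job_tmp"
```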

RDW - Research Data Warehouse

Research Data Warehouse storage has been designed to provide safe, secure, very-large capacity, low-performance storage to research groups on campus. This is the best place to store research data, but it is not intended for interactive use. Working data on Rocket should be backed up to RDW. Data to be worked on should first be copied down to working storage (the project directory).

RDW is mounted at /rdw on the login nodes only. Project leaders can request a share on RDW. For each project, the first 5TB are free; additional space is charged per TB per year.

Project shares have paths like /rdw/03/rse-hpc/ and can also be viewed on campus Windows computers at \\campus\rdw\rse-hpc.

- The local filesystems (ext, tmp, xfs, zfs) will depend on whether you’re on the same login node (or compute node, later on).

- Networked filesystems (beegfs, cifs, gpfs, nfs, pvfs) will be similar — but may include userid, depending on how it is mounted.

With all of this in mind, we will now cover how to talk to the cluster’s scheduler, and use it to start running our scripts and programs!

- An HPC system is a set of networked machines.

- HPC systems typically provide login nodes and a set of worker nodes.

- The standard method of interacting with such systems is via a command line interface called Bash.

- The resources found on independent (worker) nodes can vary in volume and type (amount of RAM, processor architecture, availability of network mounted filesystems, etc.).

- Files saved in home and project directories on one node are available on all nodes; files in /scratch are local to each node.

- Avoid running jobs on the login node

Content from Working with the scheduler

Last updated on 2025-03-11

Overview

Questions

- What is a scheduler and why is it used?

- How do I launch a program to run on any one node in the cluster?

- How do I capture the output of a program that is run on a node in the cluster?

Objectives

- Run a simple Hello World style program on the cluster.

- Submit a simple Hello World style script to the cluster.

- Use the batch system command line tools to monitor the execution of your job.

- Inspect the output and error files of your jobs.

Job Scheduler

An HPC system might have thousands of nodes and thousands of users. How do we decide who gets what and when? How do we ensure that a task is run with the resources it needs? This job is handled by a special piece of software called the scheduler. On an HPC system, the scheduler manages which jobs run where and when.

The following illustration compares these tasks of a job scheduler to a waiter in a restaurant. If you have ever had to wait in a queue to get in to a popular restaurant, then you may understand why your jobs sometimes do not start instantly, as they would on your laptop.

The scheduler used in this lesson is Slurm. Although Slurm is not used everywhere, running jobs is quite similar regardless of what software is being used. The exact syntax might change, but the concepts remain the same.

Running a Batch Job

The most basic use of the scheduler is to run a command non-interactively. Any command (or series of commands) that you want to run on the cluster is called a job, and the process of using a scheduler to run the job is called batch job submission.

work in the project directory

First, let’s get in the habit of working in the project directory. This makes it easier to ensure our collaborators can see the codes and results we produce.

Substitute your username for userid

In all our examples, you will see userid in the place of your own username.

In this case, the job we want to run is a shell script – essentially a text file containing a list of UNIX commands to be executed in a sequential manner. Our shell script will have three parts:

- On the very first line, add #!/bin/bash. The #! (pronounced “hash-bang” or “shebang”) tells the computer what program is meant to process the contents of this file. In this case, we are telling it that the commands that follow are written for the command-line shell (what we’ve been doing everything in so far).

- Anywhere below the first line, we’ll add an echo command with a friendly greeting. When run, the shell script will print whatever comes after echo in the terminal.

  - echo -n will print everything that follows, without ending the line by printing the new-line character.

- On the last line, we’ll invoke the hostname command, which will print the name of the machine the script is run on.

After our script is saved, we must make it executable, or Linux system security will not allow it to run. Use chmod +x to do this.

BASH

[userid@login01 userid]$ nano example-job.sh

[userid@login01 userid]$ chmod +x example-job.sh

[userid@login01 userid]$ cat example-job.sh

OUTPUT

#!/bin/bash

echo -n "This script is running on "

hostname

Running the script directly on the login node prints:

OUTPUT

This script is running on login01

This job runs on the login node. Remember: we don’t run jobs on the login node unless they are very small test jobs like this one.
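The whole sequence of creating, marking executable and running the script can also be done without an editor. The sketch below writes the same three-line script with a here-document; the temporary directory is only a stand-in for your project directory.

```bash
# Work in a temporary directory (a stand-in for your project directory).
cd "$(mktemp -d)"

# Write the three-part script with a here-document instead of an editor.
cat > example-job.sh <<'EOF'
#!/bin/bash
echo -n "This script is running on "
hostname
EOF

chmod +x example-job.sh   # make the script executable
./example-job.sh          # run it directly, without the scheduler
```

The name printed at the end is whichever machine the shell is on, so on the cluster this shows the login node.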

Submit a job to the scheduler

If you completed the previous challenge successfully, you probably realise that there is a distinction between running the job through the scheduler and just “running it”. To submit this job to the scheduler, we use the sbatch command with the option --partition=short.
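A submission using that option might look like the sketch below. The submit_job wrapper and its fallback message are not part of Slurm; they are assumptions added here so the snippet does something sensible on a machine where the Slurm tools are not installed.

```bash
# Hypothetical helper: submit a script to the 'short' partition if sbatch is available.
submit_job() {
    if command -v sbatch >/dev/null 2>&1; then
        sbatch --partition=short "$1"
    else
        echo "sbatch not found: run this on the cluster login node"
    fi
}

submit_job example-job.sh
```

On the login node the wrapper is unnecessary and the sbatch line on its own is enough.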

Partitions

The eagle-eyed will have noticed a PARTITION column in the output of sinfo in the previous episode. HPC systems divide resources into partitions (or queues), for efficient scheduling. The ‘short’ partition only allows very short jobs (default 1 minute time limit), which the scheduler can easily fit in the gaps between longer jobs. Using ‘short’ is best for small test jobs as they don’t have to wait in the queue behind bigger jobs.

| Partition (queue) | Nodes | Max concurrent | Time limit (wallclock) | Default time limit (wallclock) | Default memory per core |
|---|---|---|---|---|---|
| defq | standard | 528 cores | 2 days | 2 days | 2.5 GB |
| bigmem | medium,large,XL | 2 nodes | 2 days(*) | 2 days | 11 GB |
| short | all | 2 nodes | 10 minutes | 1 minute | 2.5 GB |
| long | standard | 2 nodes | 30 days | 5 days | 2.5 GB |
| power(**) | power | 1 node | 2 days | 2 days | 2.5 GB |
| interactive | all | 1 node | 1 day or 2 hours idle time | 2 hours | 2.5 GB |

OUTPUT

Submitted batch job 36855

Our work is done: now the scheduler takes over and tries to run the job for us. While the job is waiting to run, it goes into a list of jobs called the queue. To check on our job’s status, we check the queue using the command squeue -u userid.

Did it work?

If you get an error, check that you have substituted your own username for userid in the command above.

OUTPUT

JOBID PARTITION NAME USER ST TIME NODES NODELIST(REASON)

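36855 short example- userid PD 0:00 1 (None)

We can see all the details of our job, most importantly its state in the ST column. In the output above the job is still PD (PENDING); once the scheduler starts it, the state changes to R (RUNNING).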

Sometimes our jobs might need to wait in a queue (PD or PENDING) or be terminated, for example due to an OUT_OF_MEMORY (OOM) error, a TIMEOUT (TO) or some other FAILED (F) condition.

Other states you may see are: CA (cancelled), CF (configuring), CG (completing), CD (completed), NF (node failure), RV (revoked) and SE (special exit state).
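
The two-letter codes are easier to remember with a lookup to hand. The following is a minimal sketch: describe_state is a hypothetical helper written for this lesson (it is not a Slurm command), and it assumes the squeue column layout shown above, where the state is the fifth field.

```bash
# Translate a squeue state code (the ST column) into its long name.
# describe_state is an illustrative helper, not part of Slurm.
describe_state() {
    case "$1" in
        PD)  echo "PENDING" ;;
        R)   echo "RUNNING" ;;
        CA)  echo "CANCELLED" ;;
        CF)  echo "CONFIGURING" ;;
        CG)  echo "COMPLETING" ;;
        CD)  echo "COMPLETED" ;;
        F)   echo "FAILED" ;;
        NF)  echo "NODE_FAIL" ;;
        OOM) echo "OUT_OF_MEMORY" ;;
        RV)  echo "REVOKED" ;;
        SE)  echo "SPECIAL_EXIT" ;;
        TO)  echo "TIMEOUT" ;;
        *)   echo "UNKNOWN ($1)" ;;
    esac
}

# A sample line copied from the squeue output shown earlier.
sample_line="36855 short example- userid PD 0:00 1 (None)"

# In this layout the state is the fifth whitespace-separated field.
state_code=$(echo "$sample_line" | awk '{print $5}')
echo "Job state: $state_code = $(describe_state "$state_code")"
```

If your site configures squeue with a different column order, the field number in the awk command would need to change.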

Where’s the Output?

On the login node, this script printed output to the terminal — but

when we exit watch, there’s nothing. Where’d it go? HPC job

output is typically redirected to a file in the directory you launched

it from. Use ls to find and cat to read the

file.

On some HPC systems you may need to redirect the output explicitly in your job submission script. You can achieve this by setting the --output=<output_filename> and --error=<error_filename> options. On Rocket this is handled by default, with output and error files named according to the job submission id.

Customising a Job

The job we just ran used some of the scheduler’s default options. In a real-world scenario, that’s probably not what we want. The default options represent a reasonable minimum. Chances are, we will need more cores, more memory, more time, among other special considerations. To get access to these resources we must customize our job script.

Comments in UNIX shell scripts (denoted by #) are

typically ignored, but there are exceptions. For instance the special

#! comment at the beginning of scripts specifies what

program should be used to run it (you’ll typically see

#!/bin/bash). Schedulers like Slurm also have a special

comment used to denote special scheduler-specific options. Though these

comments differ from scheduler to scheduler, Slurm’s special comment is

#SBATCH. Anything following the #SBATCH

comment is interpreted as an instruction to the scheduler.

Let’s illustrate this by example. By default, a job’s name is the

name of the script, but the --job-name option can be used

to change the name of a job. Add an option to the script:

OUTPUT

#!/bin/bash

#SBATCH --job-name new_name

echo -n "This script is running on "

hostname

echo "This script has finished successfully."

Submit the job and monitor its status:

BASH

[userid@login01 userid]$ sbatch --partition=short example-job.sh

[userid@login01 userid]$ squeue -u userid

OUTPUT

JOBID USER ACCOUNT NAME ST REASON START_TIME TIME TIME_LEFT NODES CPUS

38191 userid yourAccount new_name PD Priority N/A 0:00 1:00:00 1 1

Fantastic, we’ve successfully changed the name of our job!
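
To see why scheduler options can live inside the script itself, the following minimal sketch writes a throwaway job script (demo-job.sh is a made-up file name) and runs it with plain bash, outside Slurm. Bash treats the #SBATCH line as an ordinary comment, so only the echo command produces output; the directive only matters when the script is handed to sbatch.

```bash
# Write a small job script into a temporary directory.
# demo-job.sh is a hypothetical name chosen for this illustration.
workdir=$(mktemp -d)
cat > "$workdir/demo-job.sh" <<'EOF'
#!/bin/bash
#SBATCH --job-name=new_name
echo "This script is running outside the scheduler"
EOF

# Run it directly with bash: the #SBATCH line is skipped as a comment.
direct_output=$(bash "$workdir/demo-job.sh")
echo "$direct_output"

# List the directive lines that sbatch would look for in the script.
directives=$(grep '^#SBATCH' "$workdir/demo-job.sh")
echo "$directives"
```

This is also a handy way to check which options a script carries before you submit it.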

Resource Requests

But what about more important changes, such as the number of cores and memory for our jobs? One thing that is absolutely critical when working on an HPC system is specifying the resources required to run a job. This allows the scheduler to find the right time and place to schedule our job. If you do not specify requirements (such as the amount of time you need), you will likely be stuck with your site’s default resources, which is probably not what you want.

The following are several key resource requests:

- --account=<project>: your account is typically your project code, for example training. Rocket does not charge users, but other HPCs use the --account option for charging to a project budget.

- --partition=<partition>: the partition specifies the set of nodes you want to run on. More information on available partitions is given in the Rocket documentation.

Other common options that are used are:

- --time=<hh:mm:ss>: the maximum walltime for your job. For example, for a 6.5 hour walltime you would use --time=06:30:00.

- --job-name=<jobname>: set a name for the job to help identify it in Slurm command output.
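
The --time value is easy to get wrong when converting from decimal hours. As a small sketch of the arithmetic behind the 6.5 hour example (to_walltime is a hypothetical helper, not part of Slurm):

```bash
# Convert a duration in decimal hours to the hh:mm:ss form used by --time.
# to_walltime is an illustrative helper, not a Slurm command.
to_walltime() {
    awk -v hours="$1" 'BEGIN {
        total = int(hours * 3600 + 0.5)   # whole seconds, rounded to nearest
        printf "%02d:%02d:%02d\n", int(total / 3600), int((total % 3600) / 60), total % 60
    }'
}

to_walltime 6.5    # prints 06:30:00
to_walltime 0.25   # prints 00:15:00
```

The result can be pasted straight into a job script, for example as #SBATCH --time=06:30:00.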

In addition, parallel jobs will also need to specify how many nodes, parallel processes and threads they require.

- --exclusive: ensure that you have exclusive access to a compute node.

- --nodes=<number>: the number of nodes to use for the job.

- --tasks-per-node=<processes per node>: the number of parallel processes (e.g. MPI ranks) per node.

- --cpus-per-task=<threads per task>: the number of threads per parallel process (e.g. number of OpenMP threads per MPI task for hybrid MPI/OpenMP jobs). Note: you must also set the OMP_NUM_THREADS environment variable if using OpenMP in your job, and usually add the --cpu-bind=cores option to srun.

Note that just requesting these resources does not make your job run faster, nor does it necessarily mean that you will consume all of these resources. It only means that these are made available to you. Your job may end up using less memory, or less time, or fewer tasks or nodes, than you have requested, and it will still run.

It’s best if your requests accurately reflect your job’s requirements. We’ll talk more about how to make sure that you’re using resources effectively in a later episode of this lesson.

Command line options or job script options?

All of the options we specify can be supplied on the command line (as

we do here for --partition=short) or in the job script (as

we have done for the job name above). These are interchangeable. It is

often more convenient to put the options in the job script as it avoids

lots of typing at the command line.

Submitting Resource Requests

Modify our hostname script so that it runs for a minute,

then submit a job for it on the cluster. You should also move all the

options we have been specifying on the command line

(e.g. --partition) into the script at this point.

OUTPUT

#!/bin/bash

#SBATCH --time 00:01:15

#SBATCH --partition=short

echo -n "This script is running on "

sleep 60 # time in seconds

hostname

echo "This script has finished successfully."

Why are the Slurm runtime and sleep time not identical?

Job environment variables

When Slurm runs a job, it sets a number of environment variables for

the job. One of these will let us check what directory our job script

was submitted from. The SLURM_SUBMIT_DIR variable is set to

the directory from which our job was submitted.
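
Outside a job, SLURM_SUBMIT_DIR is normally not set. The following sketch, which assumes you want to try the same lines both inside and outside the scheduler, falls back to the current directory when the variable is empty (submit_dir is just a name chosen for this example):

```bash
# Use the submission directory if Slurm has set it; otherwise fall back to
# the current directory so the snippet can also be tried outside a job.
submit_dir="${SLURM_SUBMIT_DIR:-$PWD}"

echo "This job was launched in the following directory:"
echo "$submit_dir"
```

Inside a job submitted with sbatch, the second line of output should be the directory you ran sbatch from.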

Using the SLURM_SUBMIT_DIR variable, modify your job so

that it prints out the location from which the job was submitted.

OUTPUT

#!/bin/bash

#SBATCH --partition=short

#SBATCH --time=00:01:00 # time limit in HH:MM:SS

echo -n "This script is running on "

hostname

echo "This job was launched in the following directory:"

echo ${SLURM_SUBMIT_DIR}

Resource requests are typically binding. If you exceed them, your job will be killed. Let’s use walltime as an example. We will request 30 seconds of walltime, and attempt to run a job for two minutes.

OUTPUT

#!/bin/bash

#SBATCH --job-name long_job

#SBATCH --time 00:00:30

#SBATCH --partition=short

echo "This script is running on ... "

sleep 120 # time in seconds

hostname

echo "This script has finished successfully."

Submit the job and wait for it to finish. Once it has finished, check the log file.

OUTPUT

This script is running on ...

slurmstepd: error: *** JOB 17866475 ON sb013 CANCELLED AT 2025-02-19T07:00:57 DUE TO TIME LIMIT ***

Our job was killed for exceeding the amount of resources it requested. Although this appears harsh, it is actually a feature. Strict adherence to resource requests allows the scheduler to find the best possible place for your jobs. Even more importantly, it ensures that another user cannot use more resources than they’ve been given. If another user messes up and accidentally attempts to use all of the cores or memory on a node, Slurm will either restrain their job to the requested resources or kill the job outright. Other jobs on the node will be unaffected. This means that one user cannot mess up the experience of others: the only jobs affected by a mistake in scheduling will be their own.

But how much does it cost?

Rocket does not currently charge you to run jobs but other HPCs do charge. Although your job will be killed if it exceeds the selected runtime, a job that completes within the time limit is only charged for the time it actually used. However, you should always try and specify a wallclock limit that is close to (but greater than!) the expected runtime as this will enable your job to be scheduled more quickly. If you say your job will run for an hour, the scheduler has to wait until a full hour becomes free on the machine. If it only ever runs for 5 minutes, you could have set a limit of 10 minutes and it might have been run earlier in the gaps between other users’ jobs.

Cancelling a Job

Sometimes we’ll make a mistake and need to cancel a job. This can be

done with the scancel command. Let’s submit a job and then

cancel it using its job number (remember to change the walltime so that

it runs long enough for you to cancel it before it is killed!).

OUTPUT

Submitted batch job 38759

JOBID PARTITION NAME USER ST TIME NODES NODELIST(REASON)

38759 short long_job ncb176 R 1:13 1 sb013

Now cancel the job with its job number (printed in your terminal). Absence of any job info indicates that the job has been successfully cancelled.

BASH

[userid@login01 userid]$ scancel 38759

# It might take a minute for the job to disappear from the queue...

[userid@login01 userid]$ squeue -u userid

OUTPUT

JOBID PARTITION NAME USER ST TIME NODES NODELIST(REASON)

Cancelling multiple jobs

We can also cancel all of our jobs at once using the -u

option. This will delete all jobs for a specific user (in this case us).

Note that you can only delete your own jobs. Try submitting multiple

jobs and then cancelling them all with

scancel -u userid.

Other Types of Jobs

Up to this point, we’ve focused on running jobs in batch mode. Slurm also provides the ability to start an interactive session.

There are very frequently tasks that need to be done interactively.

Creating an entire job script might be overkill, but the amount of

resources required is too much for a login node to handle. A good

example of this might be building a genome index for alignment with a

tool like HISAT2.

Fortunately, we can run these types of tasks as a one-off with

srun.

srun runs a single command in the queue system and then

exits. Let’s demonstrate this by running the hostname

command with srun. (We can cancel an srun job

with Ctrl-c.)

OUTPUT

srun: job 17866477 queued and waiting for resources

srun: job 17866477 has been allocated resources

sb013.cluster

srun accepts all of the same options as sbatch. However, instead of specifying these in a script, these options are specified on the command-line when starting a job.

Typically, the resulting shell environment will be the same as that for sbatch.

Interactive jobs

Sometimes, you will need a lot of resources for interactive use. Perhaps it’s our first time running an analysis or we are attempting to debug something that went wrong with a previous job. Fortunately, Slurm makes it easy to start an interactive job with srun:

You should be presented with a bash prompt. Note that the prompt may

change to reflect your new location, in this case the compute node we

are logged on. You can also verify this with hostname.

When you are done with the interactive job, type exit to

quit your session.

- “The scheduler handles how compute resources are shared between users.”

- “Everything you do should be run through the scheduler.”

- “A job is just a shell script.”

- “If in doubt, request more resources than you will need.”

Content from Accessing software via Modules

Last updated on 2025-03-05

Overview

Questions

- How do we load and unload software packages?

Objectives

- Understand how to load and use a software package.

On a high-performance computing system, it is seldom the case that the software we want to use is available when we log in. It is installed, but we will need to “load” it before it can run.

Before we start using individual software packages, however, we should understand the reasoning behind this approach. The three biggest factors are:

- software incompatibilities

- versioning

- dependencies

Software incompatibility is a major headache for programmers. Sometimes the presence (or absence) of a software package will break others that depend on it. Two of the most famous examples are Python 2 and 3 and C compiler versions. Python 3 famously provides a python command that conflicts with that provided by Python 2. Software compiled against a newer version of the compiler’s C++ runtime libraries and then used when they are not present will result in a nasty 'GLIBCXX_3.4.20' not found error, for instance.

Software versioning is another common issue. A team might depend on a certain package version for their research project. If the software version were to change (for instance, if a package was updated), it might affect their results. Having access to multiple software versions allows a set of researchers to prevent software versioning issues from affecting their results.

Dependencies are where a particular software package (or even a particular version) depends on having access to another software package (or even a particular version of another software package). For example, the VASP materials science software may depend on having a particular version of the FFTW (Fastest Fourier Transform in the West) software library available for it to work.

Environment Modules

Environment modules are the solution to these problems. A module is a self-contained description of a software package — it contains the settings required to run a software package and, usually, encodes required dependencies on other software packages.

There are a number of different environment module implementations

commonly used on HPC systems: the two most common are TCL

modules and Lmod. Both of these use similar syntax and the

concepts are the same so learning to use one will allow you to use

whichever is installed on the system you are using. In both

implementations the module command is used to interact with

environment modules. An additional subcommand is usually added to the

command to specify what you want to do. For a list of subcommands you

can use module -h or module help. As for all

commands, you can access the full help on the man pages with

man module.

On login you may start out with a default set of modules loaded or you may start out with an empty environment; this depends on the setup of the system you are using.

Listing Available Modules

To see available software modules, use module avail:

OUTPUT

------------------------------------------- /mnt/storage/apps/at11.0/share/modules --------------------------------------------

modulefiles/at11.0-powerpc64le-linux-gnu

---------------------------------------------- /mnt/storage/apps/eb/modules/all -----------------------------------------------

ABINIT/8.2.2-intel-2017.03-GCC-6.3

ABINIT/8.4.4-foss-2017b

ABINIT/8.4.4-intel-2017.03-GCC-6.3

ABINIT/8.10.1-intel-2018b

ABINIT/9.4.2-foss-2021b (D)

ABRicate/1.0.0-gompi-2021a

AFNI/20160329-intel-2017.03-GCC-6.3-Python-2.7.12

ALE/1.0.0-foss-2021a

ANSYS/17.0

ANSYS/18.1

ANSYS/19.4

ANSYS/2020-R2

ANSYS/2021

ANSYS/2022-R1

ANSYS/2024R1 (D)

ANTLR/2.7.7-GCCcore-11.2.0-Java-11.0.2

ANTLR/2.7.7-GCCcore-11.3.0-Java-11.0.2 (D)

ANTs/2.3.1-foss-2018b-Python-3.6.6

ANTs/2.3.2-foss-2019b-Python-3.7.4

ANTs/2.3.5-foss-2021a (D)

Loading and Unloading Software

To load a software module, use module load. In this

example we will use Python 3.

Initially, Python 3 is not loaded. We can test this by using the which command. which looks for programs the same way that Bash does, so we can use it to tell us where a particular piece of software is stored.

If the python3 command was unavailable, we would see

output like

OUTPUT

/usr/bin/which: no python3 in (/usr/local/bin:/usr/bin:/usr/local/sbin:/usr/sbin:/mnt/nfs/home/userid/bin/:/opt/ibutils/bin:/mnt/nfs/home/userid/bin/:/mnt/nfs/home/userid/.local/bin:/mnt/nfs/home/userid/bin)

Note that this wall of text is really a list, with values separated by the : character. The output is telling us that the which command searched the following directories for python3, without success:

BASH

/usr/local/bin

/usr/bin

/usr/local/sbin

/usr/sbin

/mnt/nfs/home/userid/bin/

/opt/ibutils/bin

/mnt/nfs/home/userid/bin/

/mnt/nfs/home/userid/.local/bin

/mnt/nfs/home/userid/bin

We can load the python3 command with module load:

OUTPUT

/mnt/storage/apps/eb/software/Python/3.7.0-foss-2018b/bin/python3

So, what just happened?

To understand the output, first we need to understand the nature of

the $PATH environment variable. $PATH is a

special environment variable that controls where a UNIX system looks for

software. Specifically $PATH is a list of directories

(separated by :) that the OS searches through for a command

before giving up and telling us it can’t find it. As with all

environment variables we can print it out using echo.

OUTPUT

/mnt/storage/apps/eb/software/Python/3.7.0-foss-2018b/bin:/mnt/storage/apps/eb/software/OpenSSL/1.1.0h-GCCcore-7.3.0/bin:/mnt/storage/apps/eb/software/SQLite/3.24.0-GCCcore-7.3.0/bin:/mnt/storage/apps/eb/software/Tcl/8.6.8-GCCcore-7.3.0/bin:/mnt/storage/apps/eb/software/libreadline/7.0-GCCcore-7.3.0/bin:/mnt/storage/apps/eb/software/ncurses/6.1-GCCcore-7.3.0/bin:/mnt/storage/apps/eb/software/bzip2/1.0.6-GCCcore-7.3.0/bin:/mnt/storage/apps/eb/software/FFTW/3.3.8-gompi-2018b/bin:/mnt/storage/apps/eb/software/OpenMPI/3.1.1-GCC-7.3.0-2.30/bin:/mnt/storage/apps/eb/software/hwloc/1.11.10-GCCcore-7.3.0/sbin:/mnt/storage/apps/eb/software/hwloc/1.11.10-GCCcore-7.3.0/bin:/mnt/storage/apps/eb/software/libxml2/2.9.8-GCCcore-7.3.0/bin:/mnt/storage/apps/eb/software/XZ/5.2.4-GCCcore-7.3.0/bin:/mnt/storage/apps/eb/software/numactl/2.0.11-GCCcore-7.3.0/bin:/mnt/storage/apps/eb/software/binutils/2.30-GCCcore-7.3.0/bin:/mnt/storage/apps/eb/software/GCCcore/7.3.0/bin:/usr/local/bin:/usr/bin:/usr/local/sbin:/usr/sbin:/mnt/nfs/home/userid/bin:/opt/ibutils/bin:/mnt/nfs/home/userid/.local/bin

You’ll notice a similarity to the output of the which command. In this case, the difference is at the beginning of the list: when we ran the module load command, it added the Python directory (followed by the directories of the software Python depends on) to the beginning of our $PATH. Let’s examine what’s in the first of these directories:

OUTPUT

2to3 cython f2py nosetests pip3 python python3.7m pyvenv-3.7

2to3-3.7 cythonize idle3 nosetests-3.7 pip3.7 python3 python3.7m-config runxlrd.py

chardetect easy_install idle3.7 pbr pydoc3 python3.7 python3-config tabulate

cygdb easy_install-3.7 netaddr pip pydoc3.7 python3.7-config pyvenv virtualenv

Taking this to its conclusion, module load will add software to your $PATH. It “loads” software. A special note on this: depending on which version of the module program is installed at your site, module load will also load required software dependencies.

To demonstrate, let’s use module list.

module list shows all loaded software modules.

OUTPUT

Currently Loaded Modules:

1) GCCcore/7.3.0 9) hwloc/1.11.10-GCCcore-7.3.0 17) ncurses/6.1-GCCcore-7.3.0

2) zlib/1.2.11-GCCcore-7.3.0 10) OpenMPI/3.1.1-GCC-7.3.0-2.30 18) libreadline/7.0-GCCcore-7.3.0

3) binutils/2.30-GCCcore-7.3.0 11) OpenBLAS/0.3.1-GCC-7.3.0-2.30 19) Tcl/8.6.8-GCCcore-7.3.0

4) GCC/7.3.0-2.30 12) gompi/2018b 20) SQLite/3.24.0-GCCcore-7.3.0

5) numactl/2.0.11-GCCcore-7.3.0 13) FFTW/3.3.8-gompi-2018b 21) GMP/6.1.2-GCCcore-7.3.0

6) XZ/5.2.4-GCCcore-7.3.0 14) ScaLAPACK/2.0.2-gompi-2018b-OpenBLAS-0.3.1 22) libffi/3.2.1-GCCcore-7.3.0

7) libxml2/2.9.8-GCCcore-7.3.0 15) foss/2018b 23) OpenSSL/1.1.0h-GCCcore-7.3.0

8) libpciaccess/0.14-GCCcore-7.3.0 16) bzip2/1.0.6-GCCcore-7.3.0 24) Python/3.7.0-foss-2018b

Let’s try unloading the Python module:

OUTPUT

[userid@login01 ~]$ module list

Currently Loaded Modules:

1) GCCcore/7.3.0 9) hwloc/1.11.10-GCCcore-7.3.0 17) ncurses/6.1-GCCcore-7.3.0

2) zlib/1.2.11-GCCcore-7.3.0 10) OpenMPI/3.1.1-GCC-7.3.0-2.30 18) libreadline/7.0-GCCcore-7.3.0

3) binutils/2.30-GCCcore-7.3.0 11) OpenBLAS/0.3.1-GCC-7.3.0-2.30 19) Tcl/8.6.8-GCCcore-7.3.0

4) GCC/7.3.0-2.30 12) gompi/2018b 20) SQLite/3.24.0-GCCcore-7.3.0

5) numactl/2.0.11-GCCcore-7.3.0 13) FFTW/3.3.8-gompi-2018b 21) GMP/6.1.2-GCCcore-7.3.0

6) XZ/5.2.4-GCCcore-7.3.0 14) ScaLAPACK/2.0.2-gompi-2018b-OpenBLAS-0.3.1 22) libffi/3.2.1-GCCcore-7.3.0

7) libxml2/2.9.8-GCCcore-7.3.0 15) foss/2018b 23) OpenSSL/1.1.0h-GCCcore-7.3.0

8) libpciaccess/0.14-GCCcore-7.3.0 16) bzip2/1.0.6-GCCcore-7.3.0

So using module unload “un-loads” a module, and depending on how a site is configured it may also unload all of the dependencies (in our case it does not). If we wanted to unload everything at once, we could run module purge (unloads everything).

OUTPUT

No modules loaded

More on modules

Note that module purge is informative. It will also let

us know if a default set of “sticky” packages cannot be unloaded (and

how to actually unload these if we truly so desired).

Note that this module loading process happens principally through the

manipulation of environment variables like $PATH. There is

usually little or no data transfer involved.

The module loading process manipulates other special environment variables as well, including variables that influence where the system looks for software libraries, and sometimes variables which tell commercial software packages where to find license servers.

The module command also restores these shell environment variables to their previous state when a module is unloaded.
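
The effect on $PATH can be imitated by hand. The sketch below is only an illustration of the idea, not how the module command is implemented: it creates a throwaway directory containing a fake program (demo-tool and fake_bin are made-up names), prepends that directory to $PATH as a "load", and then restores the saved value as an "unload".

```bash
# Create a throwaway directory holding a fake program called demo-tool.
fake_bin=$(mktemp -d)
printf '#!/bin/bash\necho "demo-tool from a fake module"\n' > "$fake_bin/demo-tool"
chmod +x "$fake_bin/demo-tool"

old_path=$PATH                    # remember the state before "loading"
PATH="$fake_bin:$PATH"            # "load": prepend the directory to PATH

# The shell now finds demo-tool in the directory we prepended.
found_after_load=$(command -v demo-tool)
echo "$found_after_load"

PATH=$old_path                    # "unload": restore the previous value

# With PATH restored, the lookup fails again.
command -v demo-tool || echo "demo-tool is no longer on PATH"
```

A real module typically adjusts several variables at once, but the principle of prepending on load and restoring on unload is the same.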

Software versioning

So far, we’ve learned how to load and unload software packages. This is very useful. However, we have not yet addressed the issue of software versioning. At some point or other, you will run into issues where only one particular version of some software will be suitable. Perhaps a key bugfix only happened in a certain version, or version X broke compatibility with a file format you use. In either of these example cases, it helps to be very specific about what software is loaded.

Let’s look specifically for Python in module avail:

Because so many modules match Python we’ve cheated a little and added /3 to focus down on Python 3 modules.

OUTPUT

----------------------- /mnt/storage/apps/eb/modules/all -----------------------

GitPython/3.1.24-GCCcore-11.2.0

Python/3.6.1-goolf-2017a

Python/3.6.1-intel-2017.03-GCC-6.3

Python/3.6.3-foss-2017b

Python/3.6.6-foss-2018b

Python/3.6.6-intel-2018b

Python/3.7.0-foss-2018b (D)

Python/3.7.0-intel-2018b

Python/3.7.2-GCCcore-8.2.0

Python/3.7.4-GCCcore-8.3.0

Python/3.7.5-GCCcore-8.3.0

Python/3.8.2-GCCcore-9.3.0

Python/3.8.6-GCCcore-10.2.0

Python/3.9.5-GCCcore-10.3.0-bare

Python/3.9.5-GCCcore-10.3.0

Python/3.9.6-GCCcore-11.2.0-bare

Python/3.9.6-GCCcore-11.2.0

Python/3.10.4-GCCcore-11.3.0-bare

Python/3.10.4-GCCcore-11.3.0

Python/3.10.8-GCCcore-12.2.0-bare

Python/3.10.8-GCCcore-12.2.0

Python/3.11.3-GCCcore-12.3.0

protobuf-python/3.3.0-intel-2017.03-GCC-6.3-Python-2.7.13

protobuf-python/3.3.0-intel-2017.03-GCC-6.3-Python-3.6.1

protobuf-python/3.13.0-foss-2020a-Python-3.8.2

protobuf-python/3.14.0-GCCcore-10.2.0

protobuf-python/3.17.3-GCCcore-10.3.0 (D)

Where:

D: Default Module

Use "module spider" to find all possible modules and extensions.

Use "module keyword key1 key2 ..." to search for all possible modules matching

any of the "keys".

Note that we have several different versions of Python 3. In this case, Python/3.7.0-foss-2018b has a (D) next to it. This indicates that it is the default: if we type module load Python, this is the copy that will be loaded.

Using Software Modules in Scripts

Create a job to report what version of python is running, using the command python3 --version. Running a job is just like logging on to the system (you should not assume a module loaded on the login node is loaded on a compute node).
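
One possible shape for such a job is sketched below. It assumes the Python/3.7.0-foss-2018b module and the short partition shown earlier in this lesson; python-job.sh is a made-up file name. The snippet only writes the job script and checks it for shell syntax errors, so it is safe to try on any machine.

```bash
# Write the job script into a temporary directory (python-job.sh is a made-up name).
job_dir=$(mktemp -d)
job_script="$job_dir/python-job.sh"

cat > "$job_script" <<'EOF'
#!/bin/bash
#SBATCH --partition=short
#SBATCH --time=00:01:00

# Load the module inside the job; do not rely on the login node's environment.
module load Python/3.7.0-foss-2018b

python3 --version
EOF

# Check the script for shell syntax errors without running it.
bash -n "$job_script" && echo "python-job.sh: syntax OK"
```

On the cluster, the script would then be submitted with sbatch in the usual way, and the Python version would appear in the job's output file.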

Default Versions and Module Swap

Let’s take a closer look at the gcc module. GCC is an

extremely widely used C/C++/Fortran compiler. Lots of software is

dependent on the GCC version, and might not compile or run if the wrong

version is loaded. In this case, there are 18 different versions, named

like GCC/12.2.0. How do we load each copy and which copy is

the default?

OUTPUT

---------------------------------------------------------------------------- /mnt/storage/apps/eb/modules/all -----------------------------------------------------------------------------

GCC/4.8.2 GCC/4.9.3-2.25 GCC/5.4.0-2.26 GCC/6.3.0-2.27 GCC/7.3.0-2.30 GCC/8.3.0 GCC/10.2.0 GCC/11.2.0 GCC/12.2.0

GCC/4.9.3-binutils-2.25 GCC/5.2.0 GCC/6.1.0-2.27 GCC/6.4.0-2.28 GCC/8.2.0-2.31.1 GCC/9.3.0 GCC/10.3.0 GCC/11.3.0 GCC/12.3.0 (D)

Where:

D: Default Module

Use "module spider" to find all possible modules and extensions.

Use "module keyword key1 key2 ..." to search for all possible modules matching any of the "keys".

In this case, GCC/12.3.0 has a (D) next to it. This indicates that it is the default: if we type module load GCC, this is the copy that will be loaded. Let’s check this:

BASH

[userid@login01 ~]$ module purge

[userid@login01 ~]$ module load GCC

[userid@login01 ~]$ module list

OUTPUT

Currently Loaded Modules:

1) GCCcore/12.3.0 2) zlib/1.2.13-GCCcore-12.3.0 3) binutils/2.40-GCCcore-12.3.0 4) GCC/12.3.0

OUTPUT

gcc (GCC) 12.3.0

Copyright (C) 2022 Free Software Foundation, Inc.

This is free software; see the source for copying conditions. There is NO

warranty; not even for MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE.

So how do we load the non-default copy of a software package? In this case, the only change we need to make is to be more specific about the module we are loading. To load a non-default module, we need to add the version number after the / in our module load command:

OUTPUT

The following have been reloaded with a version change:

1) GCC/12.3.0 => GCC/11.2.0 3) binutils/2.40-GCCcore-12.3.0 => binutils/2.37-GCCcore-11.2.0

2) GCCcore/12.3.0 => GCCcore/11.2.0 4) zlib/1.2.13-GCCcore-12.3.0 => zlib/1.2.11-GCCcore-11.2.0

What happened? The module command is telling us that it swapped out GCC/12.3.0 and replaced it with GCC/11.2.0.

OUTPUT

gcc (GCC) 11.2.0

Copyright (C) 2021 Free Software Foundation, Inc.

This is free software; see the source for copying conditions. There is NO

warranty; not even for MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE.

Can’t load a new module version?

Sometimes the module command gives a warning requiring you to unload the current version of a module before loading the new version. Because switching between versions of the same module is so common, you can use module swap rather than unloading one version before loading another. The equivalent of the steps above would be:

BASH

[userid@login01 ~]$ module purge

[userid@login01 ~]$ module load GCC

[userid@login01 ~]$ module swap GCC GCC/11.2.0

OUTPUT

The following have been reloaded with a version change:

1) GCC/12.3.0 => GCC/11.2.0 3) binutils/2.40-GCCcore-12.3.0 => binutils/2.37-GCCcore-11.2.0

2) GCCcore/12.3.0 => GCCcore/11.2.0 4) zlib/1.2.13-GCCcore-12.3.0 => zlib/1.2.11-GCCcore-11.2.0

And what happens when we load python again?

OUTPUT

The following have been reloaded with a version change:

1) GCC/11.2.0 => GCC/7.3.0-2.30 3) binutils/2.37-GCCcore-11.2.0 => binutils/2.30-GCCcore-7.3.0

2) GCCcore/11.2.0 => GCCcore/7.3.0 4) zlib/1.2.11-GCCcore-11.2.0 => zlib/1.2.11-GCCcore-7.3.0

Because the version of python isn’t compatible with the currently loaded version of gcc, module has done a swap for us.

system python

Watch out for the system-provided python, as it may not be the version you need. It’s best to always specify your python version. On Rocket, the default version of Python is Python 2. To confirm the version, type python --version, which on Rocket returns Python 2.7.5.

- “Load software with module load softwareName.”

- “Unload software with module unload or module purge.”

- “The module system handles software versioning and package conflicts for you automatically.”

Content from Transferring files with remote computers

Last updated on 2025-03-11

Overview

Questions

- How do I transfer files to (and from) the cluster?

Objectives

- wget and curl -O download a file from the internet.

- scp transfers files to and from your computer.

- rsync transfers within the filesystem as well as to and from your computer.

Required Files

The files used in this example can be retrieved using wget or a browser on your laptop and then copied to the remote cluster.

Using wget:

BASH

[userid@login01 ~]$ wget http://training.researchcomputing.ncl.ac.uk/training-materials/hpc-intro-data.tar.gz

Using a web browser:

http://training.researchcomputing.ncl.ac.uk/training-materials/hpc-intro-data.tar.gz

A remote cluster offers very limited use if we cannot get files to or from it. There are several options for transferring data between computing resources, from command line options to GUI programs, which we will cover here.

Download Files From the Internet

One of the most straightforward ways to download files is to use either curl or wget. One of these is usually installed in most Linux shells, in the macOS terminal and in Git Bash. Any file that can be downloaded in your web browser through a direct link can be downloaded using curl -O or wget. This is a quick way to download datasets or source code.

The syntax for these commands is:

curl -O https://some/link/to/a/file and

wget https://some/link/to/a/file. Try it out by downloading

some material we’ll use later on, from a terminal on your local

machine.

BASH

[user@laptop ~]$ curl -O http://training.researchcomputing.ncl.ac.uk/training-materials/hpc-intro-data.tar.gz

or

BASH

[user@laptop ~]$ wget http://training.researchcomputing.ncl.ac.uk/training-materials/hpc-intro-data.tar.gz

tar.gz?

This is an archive file format, just like .zip, commonly

used and supported by default on Linux, which is the operating system

the majority of HPC cluster machines run. You may also see the extension

.tgz, which is exactly the same. We’ll talk more about

“tarballs,” since “tar-dot-g-z” is a mouthful, later on.

Transferring Single Files and Folders With scp

To copy a single file to or from the cluster, we can use

scp (“secure copy”). The syntax can be a little complex for

new users, but we’ll break it down.

To upload to a remote computer:

To download from a remote computer:

Note that everything after the : is optional. If you

don’t specify a path on the remote computer, the file will be

transferred to your home directory. It’s a good idea to be clear about

where you are putting the file though, so use ~/ to upload

to the top level in your home directory. (using the handy ~

as shorthand for your home directory.)
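
The general shape of the two transfer directions can be sketched as follows. The file names local-file.txt and remote-file.txt are placeholders, and userid@rocket.hpc is the login used elsewhere in this lesson. The commands are only printed (a dry run), so the snippet is safe to try without access to the cluster.

```bash
# Login name and host; substitute your own username for userid.
remote="userid@rocket.hpc"

# Upload: the local source comes first, then the remote destination (host:path).
upload_cmd="scp local-file.txt ${remote}:~/"
echo "$upload_cmd"

# Download: the remote source comes first, then the local destination
# (a single . means the current directory on your own machine).
download_cmd="scp ${remote}:~/remote-file.txt ."
echo "$download_cmd"
```

To perform a transfer for real, type the printed command at the prompt on your local machine, not on the cluster.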

Upload a File

Copy the file you just downloaded from the Internet to your home directory on Rocket.

Can you download to the cluster directly?

Try downloading the file directly using curl or

wget. Do the commands understand file locations on your

local machine over SSH? Note that it may well fail, and that’s OK!

Using curl or wget commands like the

following:

BASH

[user@laptop ~]$ ssh userid@rocket.hpc

[userid@login01 ~]$ curl -O http://training.researchcomputing.ncl.ac.uk/training-materials/hpc-intro-data.tar.gz

or

[userid@login01 ~]$ wget http://training.researchcomputing.ncl.ac.uk/training-materials/hpc-intro-data.tar.gz

Did it work? If not, what does the terminal output tell you about what happened?

Why Not Download Directly to the cluster?

Some computer clusters are behind firewalls set to only allow

transfers initiated from the outside. This means that the

curl command will fail, as an address outside the firewall

is unreachable from the inside. To get around this, run the

curl or wget command from your local machine

to download the file, then use the scp command (just below

here) to upload it to the cluster.

Transferring a Directory

To copy a whole directory, we add the -r flag, for

“recursive”: copy the item specified, and every item

below it, and every item below those… until it reaches the bottom of the

directory tree rooted at the folder name you provided.
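To get a feel for what “recursive” means without touching the cluster, here is a minimal local sketch. It uses cp -r as a stand-in for scp -r (an assumption made so that the example runs on a single machine), and the directory and file names are hypothetical. The corresponding scp form would look like scp -r some_directory userid@rocket.hpc:~/.

```shell
# Build a small nested directory tree in a scratch location.
work=$(mktemp -d)
mkdir -p "$work/project/data/raw"
touch "$work/project/readme.txt" \
      "$work/project/data/table.csv" \
      "$work/project/data/raw/run1.txt"

# -r copies the named directory and everything below it.
cp -r "$work/project" "$work/project_copy"

# Count regular files in the original and in the copy.
n_src=$(find "$work/project" -type f | wc -l | tr -d ' ')
n_dst=$(find "$work/project_copy" -type f | wc -l | tr -d ' ')
echo "files in source: $n_src, files in copy: $n_dst"

# Tidy up the scratch directory.
rm -rf "$work"
```

Both counts should match, showing that files at every level of the tree were copied.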

Caution

For a large directory — either in size or number of files — copying

with -r can take a long time to complete.

What’s in a /?

When using scp, you may have noticed that a

: always follows the remote computer name;

sometimes a / follows that, and sometimes not, and

sometimes there’s a final /. On Linux computers,

/ is the root directory, the

location where the entire filesystem (and others attached to it) is

anchored. A path starting with a / is called

absolute, since there can be nothing above the root

/. A path that does not start with / is called

relative, since it is not anchored to the root.

If you want to upload a file to a location inside your home directory

— which is often the case — then you don’t need a leading

/. After the :, start writing the sequence of

folders that lead to the final storage location for the file or, as

mentioned above, provide nothing if your home directory is the

destination.

A trailing slash on the target directory is optional, and has no

effect for scp -r, but is important in other commands, like

rsync.

Windows Users - Transferring Files interactively with MobaXterm

MobaXterm is a free SSH client. It allows connections via a ‘jump host’, so it can even be used from home.

Transferring files to and from Campus Storage for Research Data (RDW)

RDW is mounted on Rocket at /rdw. You can use scp and

rsync to transfer data to RDW in the same way as copying to any other

directory on Rocket.

Using cp to copy to RDW

Because /rdw is a mounted filesystem, we can use

cp instead of scp:

BASH

[userid@login01 ~]$ cp file.txt /rdw/03/rse-hpc/training/userid/

[userid@login01 rse-hpc]$ cd /rdw/03/rse-hpc/training/userid/

[userid@login01 userid]$ pwd

OUTPUT

/rdw/03/rse-hpc/training/userid

OUTPUT

file.txt

Using rsync to copy to RDW

As you gain experience with transferring files, you may find the

scp command limiting. The rsync utility provides advanced

features for file transfer and is typically faster compared to both

scp and sftp (see below). It is especially

useful for transferring large and/or many files and creating synced

backup folders. The syntax is similar to cp and

scp. Rsync can be used on a locally mounted filesystem or a

remote filesystem.

Transfer to RDW from your work area on Rocket

Try out a dry run:

BASH

[userid@login01 ~]$ cd /nobackup/proj/training/userid/

[userid@sb024 userid]$ mkdir TestDir

[userid@sb024 userid]$ touch TestDir/testfile1

[userid@sb024 userid]$ touch TestDir/testfile2

[userid@login01 userid]$ rsync -av TestDir /rdw/03/rse-hpc/training/userid --dry-run

OUTPUT

sending incremental file list

TestDir/

TestDir/testfile1

TestDir/testfile2

sent 121 bytes received 26 bytes 294.00 bytes/sec

total size is 0 speedup is 0.00 (DRY RUN)

Run ‘for real’:

OUTPUT

sending incremental file list

created directory /rdw/03/rse-hpc/training/userid

rsync: chgrp "/rdw/03/rse-hpc/training/userid/TestDir" failed: Invalid argument (22)

TestDir/

TestDir/testfile1

TestDir/testfile2

rsync: chgrp "/rdw/03/rse-hpc/training/userid/TestDir/.testfile1.ofeRqX" failed: Invalid argument (22)

rsync: chgrp "/rdw/03/rse-hpc/training/userid/TestDir/.testfile2.fS1m6j" failed: Invalid argument (22)

sent 197 bytes received 415 bytes 408.00 bytes/sec

total size is 0 speedup is 0.00

rsync error: some files/attrs were not transferred (see previous errors) (code 23) at main.c(1179) [sender=3.1.2]

What happened? rsync returned an error: “some files/attrs were not transferred”. This is because RDW doesn’t ‘know’ about Rocket’s groups. The transfer was successful though! Only the ‘group’ attribute of each file couldn’t be transferred. RDW has overridden our local permissions and imposed its own standard permissions. This isn’t a problem: the correct user keeps ownership of the files.

OUTPUT

total 0

-rw------- 1 userid rockhpc_training 0 Mar 11 20:06 testfile1

-rw------- 1 userid rockhpc_training 0 Mar 11 20:06 testfile2

OUTPUT

total 33

-rwxrwx--- 1 userid domainusers 0 Mar 11 20:10 testfile1

-rwxrwx--- 1 userid domainusers 0 Mar 11 20:10 testfile2

It’s still easier to read output without errors that we have to ignore, so let’s remove that error.

The -a (archive) option tries to preserve permissions, owner and group; this is why we see the chgrp errors above.

For Rocket and RDW, replace -av with -rltv:

- -r = recurse through subdirectories

- -l = copy symlinks

- -t = preserve timestamps

- -v = verbose

OUTPUT

sending incremental file list

./

testfile1

testfile2

sent 150 bytes received 57 bytes 414.00 bytes/sec

total size is 0 speedup is 0.00

Spot the difference

Can you spot the difference between the two previous rsync commands?

Try ls -l on the destination.

OUTPUT

/rdw/03/rse-hpc/training/userid/:

TestDir testfile1 testfile2

/rdw/03/rse-hpc/training/userid/TestDir:

testfile1 testfile2

We now have too many files! The first rsync command copied

TestDir because there was no trailing /.

The second rsync command only copied the contents of

TestDir because of the trailing /.

We could have spotted this by looking at the output of

--dry-run but this shows it’s a good idea to check the

destination after you copy.
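The effect of the trailing / can be reproduced safely in a scratch directory. This is a sketch using hypothetical local paths in place of /rdw, and it assumes rsync is installed on the machine where you run it.

```shell
# Scratch area standing in for the real source and destination.
work=$(mktemp -d)
mkdir -p "$work/TestDir" "$work/dest_noslash" "$work/dest_slash"
touch "$work/TestDir/testfile1" "$work/TestDir/testfile2"

if command -v rsync >/dev/null 2>&1; then
    # No trailing slash: the directory itself is copied into the destination.
    rsync -rlt "$work/TestDir" "$work/dest_noslash"

    # Trailing slash: only the contents of the directory are copied.
    rsync -rlt "$work/TestDir/" "$work/dest_slash"

    # Record what ended up at the top level of each destination.
    no_slash=$(ls "$work/dest_noslash" | tr '\n' ' ')
    with_slash=$(ls "$work/dest_slash" | tr '\n' ' ')
    echo "without slash: $no_slash"
    echo "with slash:    $with_slash"
else
    echo "rsync not found; skipping the demonstration"
fi

# Tidy up the scratch directory.
rm -rf "$work"
```

The first destination ends up holding TestDir, while the second holds testfile1 and testfile2 directly.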

Large data copies

rsync really comes into its own when copying large amounts of data. It is important to keep track of progress in case the copy is interrupted, and rsync can pick up where it left off rather than starting the copy all over again. It’s also useful to write the output to a log so you can see what was transferred and find any errors that need to be addressed.

Fast Connections

Transfers from Rocket to RDW don’t leave our fast data centre network. If you’re using rsync with a fast network or disk to disk in the same machine:

- DON’T use compression (-z)

- DO use --inplace

Why? Compression uses a lot of CPU, and rsync usually writes each file to a temporary file on disk before moving it into place. For fast transfers, this places too much load on the CPU and hard drive. --inplace tells rsync not to create the temporary file but to write the data straight to the destination file. It doesn’t matter if the connection is interrupted, because re-running the same command lets rsync carry on from where it stopped. Always re-run the transfer command to ensure nothing was missed. The second run should be very fast, checking the files without copying anything.

Slow Connections

For a slow connection like the internet:

- DO use compression (-z)

- DON’T use --inplace

Large Transfer to RDW

RDW has a super-fast connection to Rocket, which means that it takes more resources to compress and uncompress the data than it does to do the transfer. What command would be best for backing up a large amount of data from Rocket to RDW?

add a dry run and a log file

Try out a dry run:

BASH

rsync --dry-run -rltv --inplace --itemize-changes --progress --stats --whole-file --size-only /nobackup/myusername/source /rdw/path/to/my/share/destination/ 2>&1 | tee /home/myusername/meaningful-log-name.log1

Run ‘for real’:

BASH

rsync -rltv --inplace --itemize-changes --progress --stats --whole-file --size-only /nobackup/myusername/source /rdw/path/to/my/share/destination/ 2>&1 | tee /home/myusername/meaningful-log-name.log2

- --inplace --whole-file --size-only speed up the transfer and prevent rsync filling up space with a large temporary directory

- --itemize-changes --progress --stats give more informative output

- Remember | from the Unix Shell workshop? | tee sends output both to the screen and to a log file

- Many arguments can be written either as single letters like -v or as full words like --verbose. Use man rsync to craft your favourite arguments list.
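The 2>&1 | tee pattern can be tried on its own. A minimal sketch, using a hypothetical log file in a scratch directory and a stand-in command group in place of rsync:

```shell
# Hypothetical log location in a scratch directory.
work=$(mktemp -d)
log="$work/transfer.log"

# Stand-in for rsync: one line on standard output, one on standard error.
# 2>&1 merges the error stream into standard output before the pipe,
# so tee shows both on screen and writes both to the log file.
{ echo "copied testfile1"; echo "warning: chgrp failed" >&2; } 2>&1 | tee "$log"

# Count the lines that reached the log.
log_lines=$(wc -l < "$log" | tr -d ' ')
echo "lines in log: $log_lines"

# Tidy up the scratch directory.
rm -rf "$work"
```

Without 2>&1, only standard output would reach tee, and error messages would appear on screen but be missing from the log.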

A Note on Ports

All file transfers using the above methods use SSH to encrypt data

sent through the network. So, if you can connect via SSH, you will be

able to transfer files. By default, SSH uses network port 22. If a

custom SSH port is in use, you will have to specify it using the

appropriate flag, often -p, -P, or

--port. Check --help or the man

page if you’re unsure.
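As an illustration, assuming a hypothetical custom port 2222 (not a Rocket setting), the flag differs between tools: ssh takes a lowercase -p, scp an uppercase -P, and rsync running over SSH can pass the port through its -e option. The sketch prints the commands rather than running them, and local_file and local_dir are placeholder names.

```shell
# Hypothetical values for illustration only.
port=2222
remote="userid@rocket.hpc"

# ssh: lowercase -p selects the port.
ssh_cmd="ssh -p ${port} ${remote}"

# scp: uppercase -P selects the port.
scp_cmd="scp -P ${port} local_file ${remote}:~/"

# rsync over SSH: -e supplies the ssh command, including its port.
rsync_cmd="rsync -rltv -e 'ssh -p ${port}' local_dir ${remote}:~/"

# Print the commands instead of executing them.
printf '%s\n' "$ssh_cmd" "$scp_cmd" "$rsync_cmd"
```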

Archiving Files

One of the biggest challenges we often face when transferring data between remote HPC systems is that of large numbers of files. There is an overhead to transferring each individual file and when we are transferring large numbers of files these overheads combine to slow down our transfers to a large degree.

The solution to this problem is to archive multiple files into smaller numbers of larger files before we transfer the data to improve our transfer efficiency. Sometimes we will combine archiving with compression to reduce the amount of data we have to transfer and so speed up the transfer.

The most common archiving command you will use on a (Linux) HPC

cluster is tar. tar can be used to combine

files into a single archive file and, optionally, compress it.
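A minimal sketch of creating and inspecting an archive, using hypothetical file names in a scratch directory. Here -c creates an archive, -z compresses it with gzip, -f names the archive file, and -t lists its contents.

```shell
# Scratch directory with a handful of small files to bundle together.
work=$(mktemp -d)
mkdir -p "$work/results"
for i in 1 2 3 4 5; do
    echo "sample $i" > "$work/results/run$i.txt"
done

# -C changes into the scratch directory first, so the archive holds relative paths.
tar -czf "$work/results.tar.gz" -C "$work" results

# List the contents without unpacking, then count the .txt entries.
tar -tzf "$work/results.tar.gz"
n_entries=$(tar -tzf "$work/results.tar.gz" | grep -c '\.txt$')
echo "text files in archive: $n_entries"

# Tidy up the scratch directory.
rm -rf "$work"
```

Five files travel as one archive, so a transfer pays the per-file overhead only once.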

Let’s start with the file we downloaded from the lesson site, hpc-intro-data.tar.gz. The “gz” part stands for gzip, which is a compression library. Reading this file name, it appears somebody took a folder named “hpc-intro-data,” wrapped up all its contents in a single file with tar, then compressed that archive with gzip to save space. Let’s check, on the remote computer, using tar with the -t flag, which prints the “table of contents” without unpacking the file named by -f <filename>. Note that you can concatenate the two flags into -tf instead of writing -t -f separately.

BASH

[user@laptop ~]$ ssh userid@rocket.hpc

[userid@login01 ~]$ tar -tf hpc-intro-data.tar.gz

hpc-intro-data/

hpc-intro-data/north-pacific-gyre/

hpc-intro-data/north-pacific-gyre/NENE01971Z.txt

hpc-intro-data/north-pacific-gyre/goostats

hpc-intro-data/north-pacific-gyre/goodiff

hpc-intro-data/north-pacific-gyre/NENE02040B.txt

hpc-intro-data/north-pacific-gyre/NENE01978B.txt

hpc-intro-data/north-pacific-gyre/NENE02043B.txt

hpc-intro-data/north-pacific-gyre/NENE02018B.txt

hpc-intro-data/north-pacific-gyre/NENE01843A.txt

hpc-intro-data/north-pacific-gyre/NENE01978A.txt

hpc-intro-data/north-pacific-gyre/NENE01751B.txt

hpc-intro-data/north-pacific-gyre/NENE01736A.txt

hpc-intro-data/north-pacific-gyre/NENE01812A.txt

hpc-intro-data/north-pacific-gyre/NENE02043A.txt

hpc-intro-data/north-pacific-gyre/NENE01729B.txt

hpc-intro-data/north-pacific-gyre/NENE02040A.txt

hpc-intro-data/north-pacific-gyre/NENE01843B.txt

hpc-intro-data/north-pacific-gyre/NENE01751A.txt

hpc-intro-data/north-pacific-gyre/NENE01729A.txt

hpc-intro-data/north-pacific-gyre/NENE02040Z.txt

This shows a folder containing another folder, which contains a bunch

of files. If you’ve taken The Carpentries’ Shell lesson recently, these

might look familiar. Let’s see about that compression, using

du for “disk usage”.

Files Occupy at Least One “Block”

If the filesystem block size is larger than 36 KB, you’ll see a larger number: files cannot be smaller than one block.
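A sketch of the kind of check this refers to, using a hypothetical one-byte file rather than the lesson archive: wc -c reports the bytes stored in the file, while du reports the disk space allocated to it, which depends on the filesystem.

```shell
# Scratch directory holding a file with a single byte of content.
work=$(mktemp -d)
printf 'x' > "$work/tiny.txt"

# Bytes of content in the file.
content_bytes=$(wc -c < "$work/tiny.txt" | tr -d ' ')

# Disk space allocated to the file, in kilobytes (varies by filesystem).
allocated_kb=$(du -k "$work/tiny.txt" | cut -f1)

echo "content: $content_bytes byte(s); allocated: $allocated_kb KB"

# Tidy up the scratch directory.
rm -rf "$work"
```

For the lesson archive, the analogous check would be du -sh followed by the archive’s file name.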

Now let’s unpack the archive. We’ll run tar with a few

common flags:

- -x to extract the archive

- -v for verbose output

- -z for gzip compression

- -f for the file to be unpacked